Last edited by 小小小小的鱼丶 on 2018-7-21 21:42

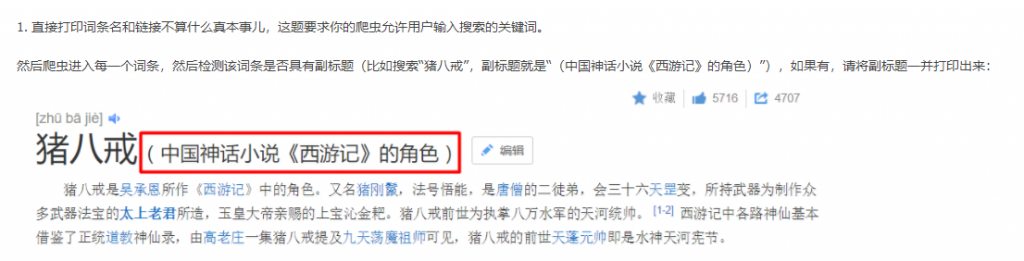

Task requirements

The source code is as follows:

    import urllib.request
    import urllib.parse
    import re
    from bs4 import BeautifulSoup

    def main():
        keyword = input("Enter a keyword: ")
        keyword = urllib.parse.urlencode({"word":keyword})
        response = urllib.request.urlopen("http://baike.baidu.com/search/word?%s" % keyword)
        html = response.read()
        soup = BeautifulSoup(html, "html.parser")

        for each in soup.find_all(href=re.compile("view")):
            content = ''.join([each.text])
            url2 = ''.join(["http://baike.baidu.com", each["href"]])
            response2 = urllib.request.urlopen(url2)
            html2 = response2.read()
            soup2 = BeautifulSoup(html2, "html.parser")
            if soup2.h2:
                content = ''.join([content, soup2.h2.text])
            content = ''.join([content, " -> ", url2])
            print(content)

    if __name__ == "__main__":
        main()

This part is the problem. How should I fix it?

    url2 = ''.join(["http://baike.baidu.com", each["href"]])
    response2 = urllib.request.urlopen(url2)
    html2 = response2.read()

Error:

    UnicodeEncodeError: 'ascii' codec can't encode characters in position 36-39: ordinal not in range(128)
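A likely cause is that `each["href"]` contains raw Chinese characters, while the URL handed to `urlopen` has to be pure ASCII. One possible fix is to percent-encode the path before opening it. The sketch below assumes the href is a site-relative path such as `/view/...`; `build_item_url` and the sample href are hypothetical and not part of the original code.

```python
import urllib.parse

BASE = "http://baike.baidu.com"

def build_item_url(href, base=BASE):
    """Join base and href, percent-encoding any non-ASCII characters.

    '%' is listed in `safe` so an href that is already percent-encoded
    is not encoded a second time.
    """
    return base + urllib.parse.quote(href, safe="/:?=&%#")

# Illustrative href only; real hrefs come from the search result page.
print(build_item_url("/view/中文.htm"))
```

In the loop this would replace the `''.join([...])` line with `url2 = build_item_url(each["href"])`. If the page ever returns absolute links, the base should not be prepended, so check what the hrefs actually look like first.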

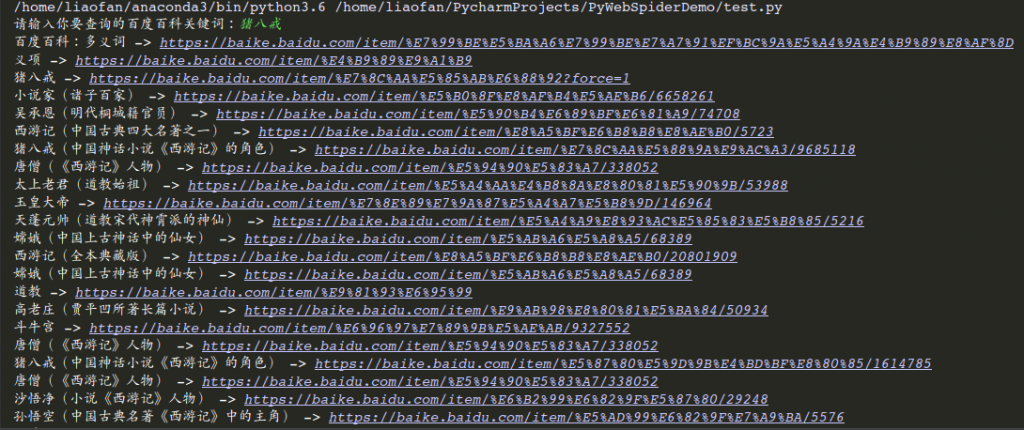

Here is the code I wrote:

    import urllib.request
    import re
    import time
    import urllib.parse

    headers = {
        'User-Agent': 'Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/67.0.3396.99 Safari/537.36'
    }

    # Return the URL of the first search result for the (already quoted) keyword
    def getKeyUrl(key_word):
        init_url = 'https://baike.baidu.com/search?word=' + key_word
        req = urllib.request.Request(init_url, headers=headers)
        key_text = urllib.request.urlopen(req)
        key_html = re.findall(r'<a class="result-title" href="(.*?)"', key_text.read().decode('utf-8'), re.S)
        return key_html[0]

    # Collect every /item/... link found on the entry page
    def getAllKeyUrl(key_url):
        req = urllib.request.Request(key_url, headers=headers)
        key_text = urllib.request.urlopen(req)
        key_url_list = re.findall(r'href="/item/(.*?)"', key_text.read().decode('utf-8'), re.S)
        return key_url_list

    # Print "title [subtitle] -> url" for each linked entry
    def getMainTitle(key_url_list):
        for title in key_url_list:
            url = 'https://baike.baidu.com/item/' + title
            req = urllib.request.Request(url, headers=headers)
            key_text = urllib.request.urlopen(req)
            main_title_list = re.findall(r'<h1\s>(.*?)</h1>', key_text.read().decode('utf-8'), re.S)
            copy_title_list = getCopyTitle(url)
            if copy_title_list:
                print(main_title_list[0] + copy_title_list[0] + ' -> ' + url)
            else:
                print(main_title_list[0] + ' -> ' + url)
            time.sleep(1)  # pause between requests

    # Return the <h2> subtitles of an entry page, if any
    def getCopyTitle(url):
        req = urllib.request.Request(url, headers=headers)
        key_text = urllib.request.urlopen(req)
        copy_title_list = re.findall(r'<h2>(.*?)</h2>', key_text.read().decode('utf-8'), re.S)
        return copy_title_list

    if __name__ == '__main__':
        key_word = input('Enter the Baidu Baike keyword to look up: ')
        # OP, try replacing urllib.parse.quote(key_word) with plain key_word (i.e. drop the quote call) and you will see what goes wrong
        key_url = getKeyUrl(urllib.parse.quote(key_word))
        key_url_list = getAllKeyUrl(key_url)
        getMainTitle(key_url_list)

The output after running it:

Pay attention to the part I commented for you above.
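To see the effect of that comment without hitting the network: the HTTP request line has to be encoded as ASCII, so a raw Chinese keyword fails at that step while the quoted one goes through. This is only a rough sketch of what happens inside `urlopen`; the `request_line` helper and the keyword are made up for illustration.

```python
import urllib.parse

def request_line(key_word):
    """Build a request line similar to the one urlopen sends, encoded as ASCII."""
    path = "/search?word=" + key_word
    return ("GET %s HTTP/1.1" % path).encode("ascii")

key_word = "鱼"  # illustrative keyword

try:
    request_line(key_word)  # raw Chinese text cannot be encoded as ASCII
except UnicodeEncodeError as err:
    print("without quote:", err)

# After quoting, the keyword is plain ASCII percent-escapes
print("with quote:", request_line(urllib.parse.quote(key_word)))
```

The same reasoning applies to any later URL built from page content, not just the search keyword.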

( 粤ICP备18085999号-1 | 粤公网安备 44051102000585号)
